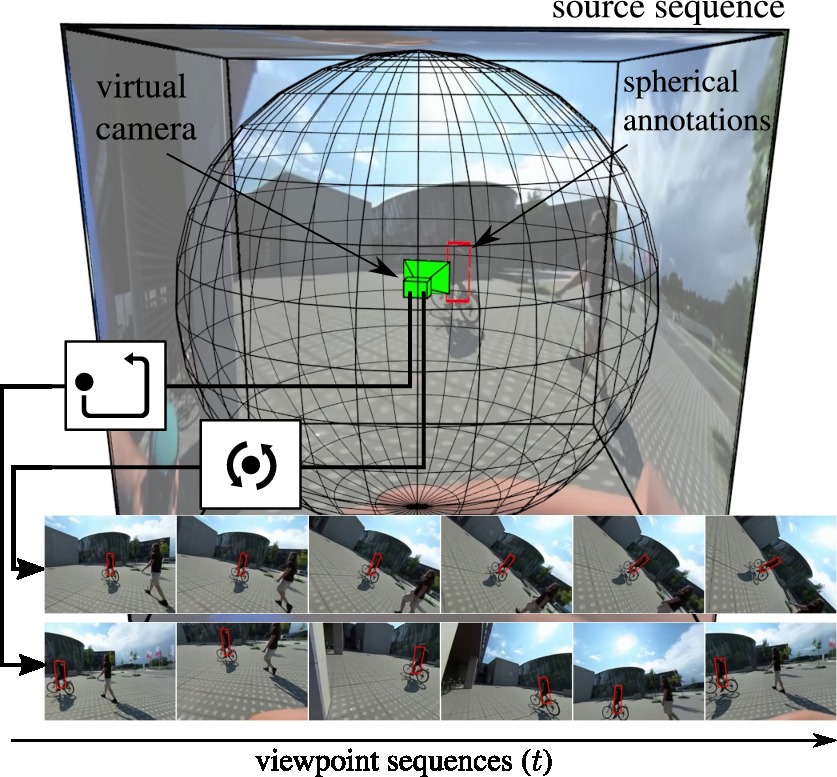

Object-to-camera motion produces a variety of apparent motion patterns that significantly affect the performance of short-term visual trackers. Although these patterns are crucial for designing robust trackers, their influence is poorly explored in standard benchmarks because attribute annotations are weakly defined, biased and overlapping. In this paper we propose to go beyond pre-recorded benchmarks with post-hoc annotations by presenting an approach that uses omnidirectional videos to generate realistic, consistently annotated, short-term tracking scenarios with exactly parameterized motion patterns. We have created an evaluation system, a fully annotated dataset of omnidirectional videos, and generators for typical motion patterns.

Dataset

The AMP dataset is available here. It contains 15 omnidirectional sequences annotated with object regions in spherical space.
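To illustrate what an annotation in spherical space can mean in practice, the following is a minimal sketch that converts a viewing direction into pixel coordinates of an omnidirectional frame and back. It assumes that frames are stored in equirectangular projection and that yaw 0, pitch 0 maps to the image centre; both are assumptions made for this example, and the function names are hypothetical rather than part of the AMP dataset or its tools.

```python
import math


def spherical_to_equirect(yaw, pitch, width, height):
    """Map a viewing direction to pixel coordinates.

    Assumed convention (not taken from the dataset): yaw in [-pi, pi),
    pitch in [-pi/2, pi/2], positive pitch pointing up, and the
    direction (0, 0) landing at the centre of the frame.
    """
    x = (yaw / (2.0 * math.pi) + 0.5) * width
    y = (0.5 - pitch / math.pi) * height
    return x, y


def equirect_to_spherical(x, y, width, height):
    """Inverse mapping: pixel coordinates back to (yaw, pitch) in radians."""
    yaw = (x / width - 0.5) * 2.0 * math.pi
    pitch = (0.5 - y / height) * math.pi
    return yaw, pitch
```

Under these assumptions, a region annotated by its corner directions can be mapped to pixel positions one corner at a time, independently of how a virtual camera is later oriented.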

Software

The experiments for the paper were performed using the PreTZel framework and the VOT toolkit. The PreTZel repository contains instructions on how to set up the software, as well as the experiment stack for the AMP benchmark.

Results

The raw results of the AMP benchmark evaluation from the ICCV 2017 paper are available here. Please note that when trying to reproduce the results for trackers that have already been evaluated, some minor differences may occur because the evaluation software has since changed, which slightly altered rounding and interpolation.